Case Media

Case Notes

This page keeps the media, full prompt, and original source together so you can inspect the result first and decide whether the prompt is worth copying, saving, or comparing.

Case Insights

To make this page easier to search, cite, and reuse later, the case is also broken down into practical guidance about usage, visual cues, and prompt structure.

Best Fit Scenarios

- Use this as a UI & Social Screens benchmark when you need a quick style baseline before rewriting your own prompt.

- It is especially helpful if your target overlaps with the UI, Screenshot, or UI & Social Screens tags and you want to judge the image result before tuning the wording.

- Keep it as a control sample when you compare nearby prompt variants one variable at a time.
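
The control-sample idea above can be sketched in a few lines of Python. This is only an illustration: the block names (`style`, `layout`, `subject`) and the alternative values are hypothetical and not part of the original case, while the baseline wording is loosely borrowed from the full prompt further down.

```python
from typing import Dict, List, Tuple

# Baseline blocks, loosely borrowed from the full prompt on this page.
# The split into "style" / "layout" / "subject" is an assumption for illustration.
BASELINE: Dict[str, str] = {
    "style": "High-fidelity systems figure, subtle drop-shadows, warm-copper highlights",
    "layout": "Landscape 16:9, four labeled zones",
    "subject": "Multi-agent LLM architecture",
}

# Hypothetical alternatives to try, grouped by the block they replace.
ALTERNATIVES: Dict[str, List[str]] = {
    "layout": ["Portrait 9:16, four stacked zones"],
    "subject": ["Data pipeline architecture", "CI/CD deployment flow"],
}


def one_at_a_time(
    baseline: Dict[str, str], alternatives: Dict[str, List[str]]
) -> List[Tuple[str, Dict[str, str]]]:
    """Return (changed_block, variant) pairs that differ from the baseline in one block."""
    variants = []
    for key, options in alternatives.items():
        for option in options:
            variant = dict(baseline)  # copy so the control sample stays untouched
            variant[key] = option
            variants.append((key, variant))
    return variants


def render(blocks: Dict[str, str]) -> str:
    """Join the blocks into a single prompt string."""
    return ". ".join(blocks.values()) + "."


variants = one_at_a_time(BASELINE, ALTERNATIVES)
for changed, blocks in variants:
    print(f"[changed: {changed}] {render(blocks)}")
```

Because each variant changes exactly one block, any difference in the generated image can be attributed to that block rather than to a mix of edits.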

Visual Signals To Notice

- The clearest style signals here are UI, Screenshot, and UI & Social Screens, so those should usually stay in your first rewrite.

- What matters most is usually interface density, card hierarchy, and how the screen tells the story before you read the small text.

- This case keeps one primary output, so the first image should be treated as the main visual reference.

How The Prompt Is Structured

- This is a long, highly specified prompt, which makes it useful for judging how much specificity this direction needs.

- Its keyword cluster centers on UI, Screenshot, and UI & Social Screens, so you can usually keep that cluster while swapping subject, camera, layout, or copy details.

- A practical rewrite path is: keep the outcome, keep the strongest style cues, then replace only the subject and environment blocks.
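
As a rough sketch of the rewrite path above, the prompt can be treated as four named blocks, with only the subject and environment swapped. The `PromptBlocks` class, its field names, and the replacement subject are all hypothetical; the base values are shortened from the full prompt on this page.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PromptBlocks:
    """Hypothetical four-block split of an image prompt (an assumption, not the page's format)."""
    outcome: str      # what kind of image you want
    style_cues: str   # the strongest visual signals to keep
    subject: str      # what the image depicts
    environment: str  # layout and surrounding detail

    def render(self) -> str:
        return f"{self.outcome} of {self.subject}. {self.style_cues}. {self.environment}."


# Base values shortened from the full prompt below.
base = PromptBlocks(
    outcome="Landscape 16:9 high-fidelity systems figure",
    style_cues="Subtle drop-shadows, warm-copper highlights, numbered flow markers",
    subject="a multi-agent LLM architecture",
    environment='Four labeled zones from "User interface" to "Tools & memory"',
)


def rewrite(blocks: PromptBlocks, subject: str, environment: str) -> PromptBlocks:
    """Keep outcome and style cues; replace only the subject and environment blocks."""
    return replace(blocks, subject=subject, environment=environment)


# Illustrative replacement subject, not taken from the original case.
variant = rewrite(
    base,
    subject="a streaming data pipeline",
    environment='Three labeled zones from "Ingest" to "Serving"',
)
print(variant.render())
```

Making the dataclass frozen keeps the base prompt immutable, so every rewrite starts from the same reference wording.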

Good Follow-up Questions

- What changes first if you keep UI, Screenshot, and UI & Social Screens but switch the subject matter?

- Which part of the result comes from section-level structure (UI & Social Screens) versus tag-level style cues?

- Which related cases in the same section give you a cleaner or more extreme variation of the same direction?

Full Prompt

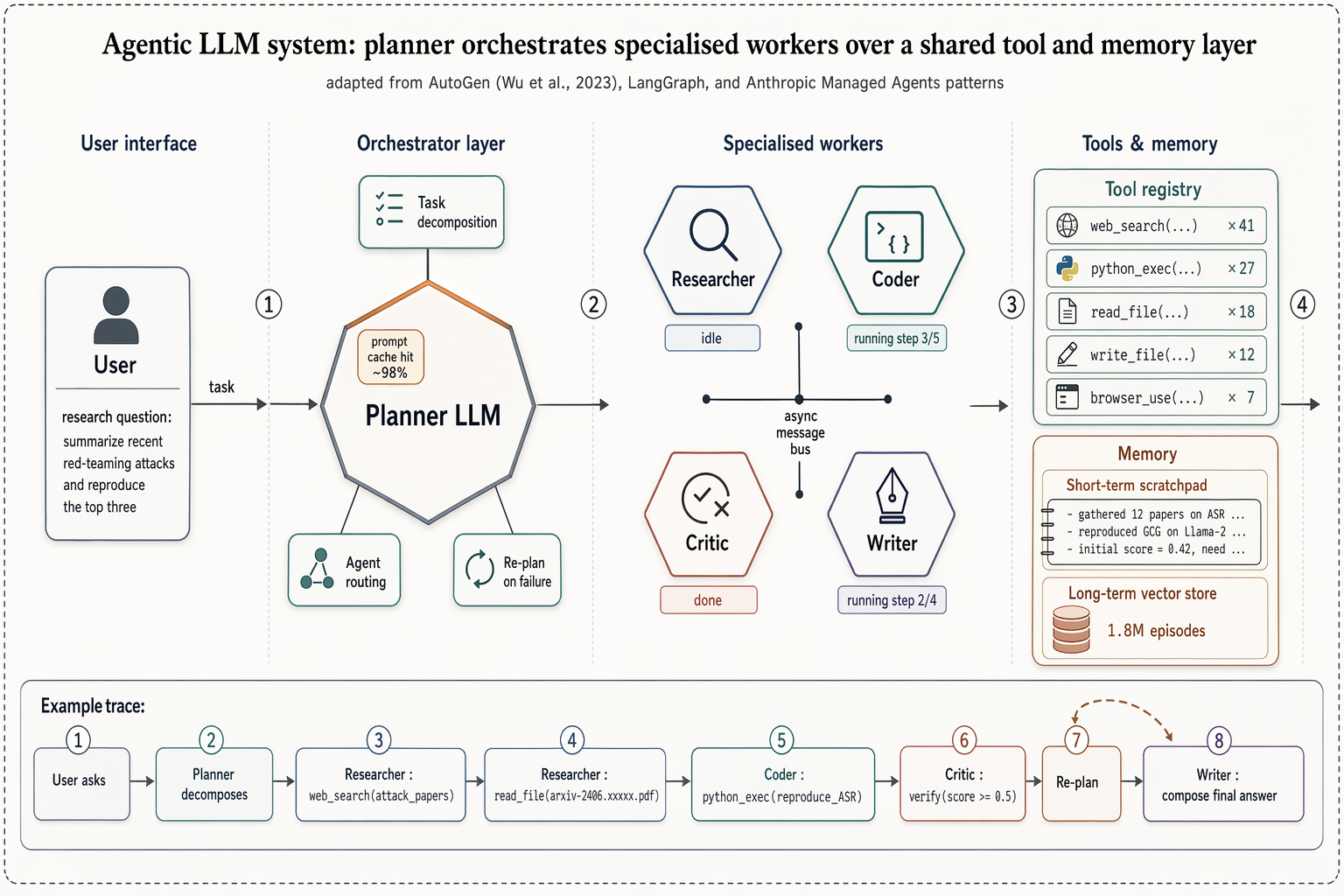

Landscape 16:9 high-fidelity systems figure of a multi-agent LLM architecture, in the style of a richly detailed AutoGen / LangGraph / Anthropic Managed Agents Figure 1. Subtle drop-shadows, warm-copper highlights, numbered flow markers ①②③④. ZONE 1 — "User interface": rounded user box with placeholder task "research question: summarize recent red-teaming attacks and reproduce the top three". ZONE 2 — "Orchestrator layer": central hexagonal hub "Planner LLM" with warm-copper top edge. Three satellite chips: "Task decomposition", "Agent routing", "Re-plan on failure". Small inset chip "prompt cache hit ~98%". ZONE 3 — "Specialised workers": 2×2 hexagons "Researcher" / "Coder" / "Critic" / "Writer", each with glyph + status ribbon ("idle", "running step 3/5", "done", "running step 2/4"). Centre labeled "async message bus". ZONE 4 — "Tools & memory": (a) "Tool registry" panel listing "web_search ×41", "python_exec ×27", "read_file ×18", "write_file ×12", "browser_use ×7"; (b) "Memory" panel with "Short-term scratchpad" and cylinder "Long-term vector store — 1.8M episodes". Bottom inset "Example trace": 8-step horizontal timeline chips from "User asks" through "Planner decomposes", "Researcher: web_search(...)", "Coder: python_exec(...)", "Critic: verify", "Re-plan" (loop-back arrow), "Writer: compose final answer". Title: "Agentic LLM system: planner orchestrates specialised workers over a shared tool and memory layer". Subtitle: "adapted from AutoGen (Wu et al., 2023), LangGraph, and Anthropic Managed Agents patterns".